Download an entire website or individual web pages with SurfOffline, a convenient website downloader with an easy-to-use interface.

SurfOffline 2.2 - Website downloader

If you need to download a website, use the website downloader SurfOffline. We offer you a free 30-day trial period to test SurfOffline.

SurfOffline is fast and convenient website download software. It allows you to download entire websites and individual web pages to your local hard drive. SurfOffline combines powerful features with a convenient interface. The SurfOffline wizard lets you quickly specify the website download settings. After the website has been downloaded, you can use SurfOffline as an offline browser and view the downloaded web pages in it.

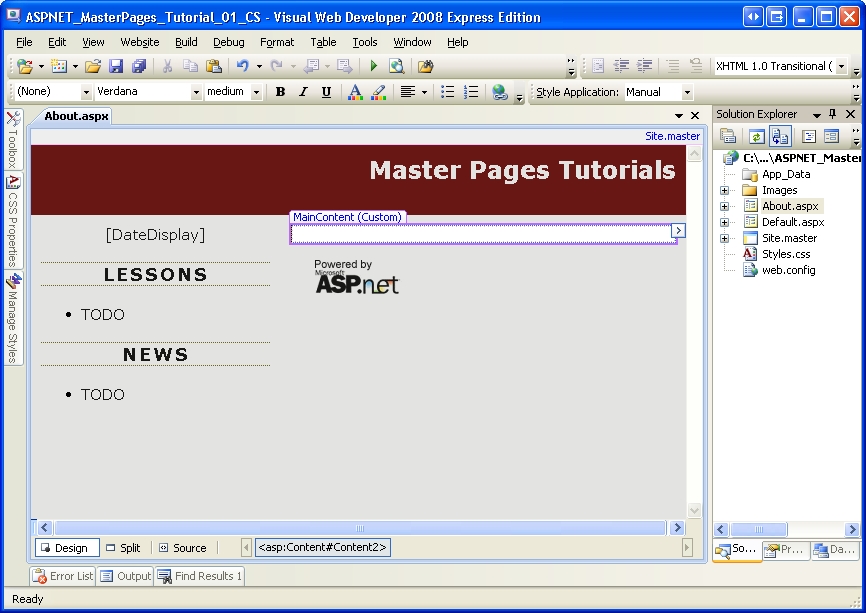

Let's see how to import data from a website with Excel VBA code.

How to Extract Data from a Website to Excel Using VBA

HTML screen scraping can be done by creating an instance of the object “MSXML2.XMLHTTP” or “MSXML2.ServerXMLHTTP”. Use this approach in VBA to pull data from a website, i.e. the HTML source of a page, and place the content in an Excel sheet. Create a new Excel workbook. Press Alt + F11 to open the VB editor. Paste the code into the code window. Change the URL in the code to the page you want to fetch.
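For readers who want to try the same idea outside Excel, here is a minimal Python sketch of it, not the VBA routine itself: it requests a page with a plain HTTP GET and writes the HTML source, one line per row, to a CSV file that Excel can open. The function names, the User-Agent value, and the file layout are assumptions made for illustration.

```python
import csv
import urllib.request


def fetch_html(url, timeout=10):
    """Return the HTML source of `url` as text, using a plain HTTP GET."""
    # Some servers reject requests without a User-Agent; this value is arbitrary.
    request = urllib.request.Request(url, headers={"User-Agent": "Mozilla/5.0"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def html_to_rows(html):
    """Split the source into single-column rows, skipping blank lines."""
    return [[line.strip()] for line in html.splitlines() if line.strip()]


def save_rows(rows, path):
    """Write the rows to a CSV file that Excel can open."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        csv.writer(handle).writerows(rows)


def download_to_csv(url, path):
    """Fetch `url` and store its HTML source in `path`, one line per row."""
    save_rows(html_to_rows(fetch_html(url)), path)
```

Calling download_to_csv with the page address and an output file name performs the whole round trip; as with the VBA version, change the URL to the page you want.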

Execute the code by pressing F5.

Once the web page content has been extracted, the next step is to parse the HTML and process each HTML element.

Clear Cache for Every VBA Extract Data from Website

Run the code below every time before executing the code above, because the extracted website data can remain in the cache after each execution. If you run the code again without clearing the cache, the old data will be displayed again.

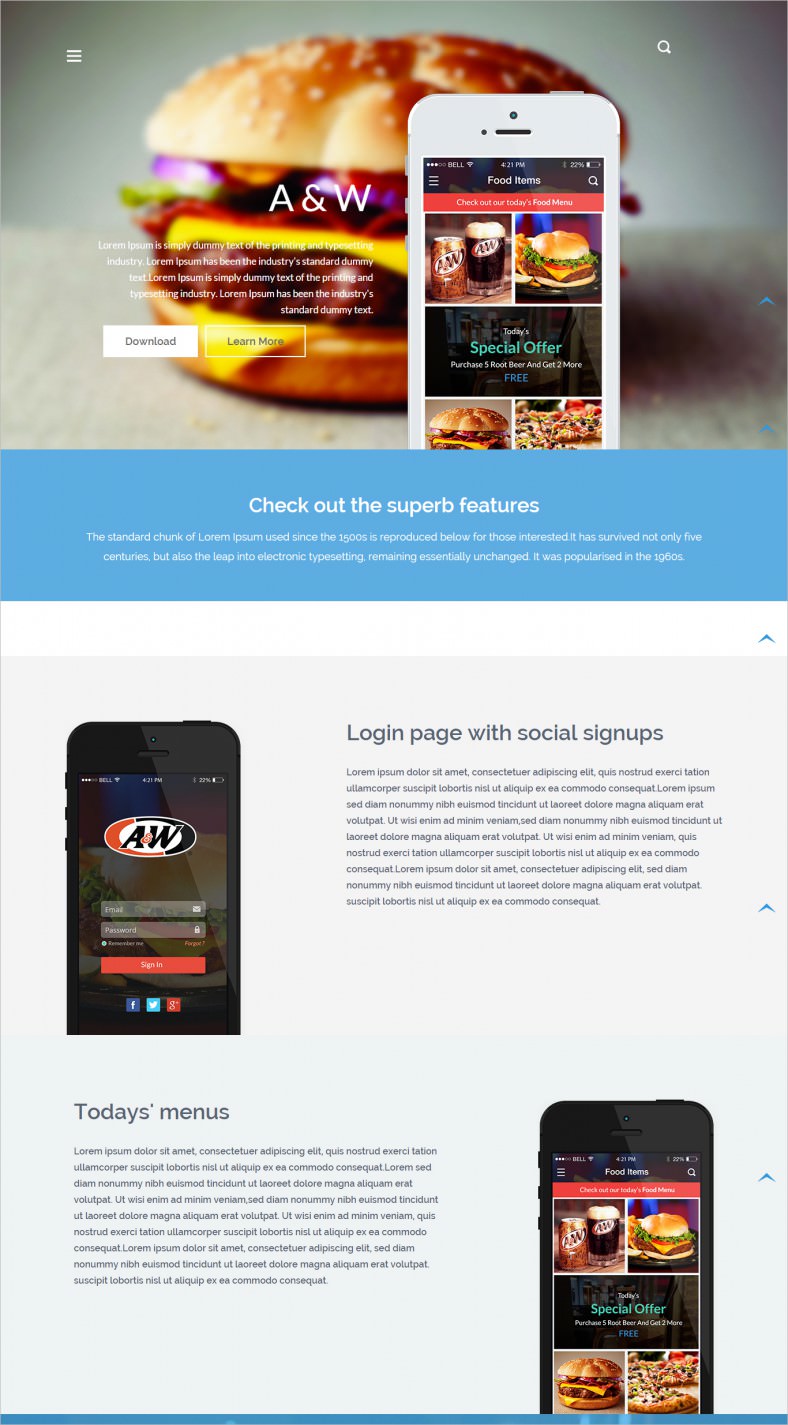


Shell "RunDll32.exe InetCpl.cpl,ClearMyTracksByProcess 11"

Note: Once this code is executed, it clears the Internet Explorer cache on your machine, so please run it with caution. All your previous sessions and unfinished work will be deleted from the browser cache.

Detect Broken URLs or Dead Links in a Web Page

Sometimes we only need to check whether a URL is active or not.

In that case, insert a checking IF condition right after the .Send command. If the URL is not active, this check shows an error message.

Search Engines Extract Website Data

Web browsers like IE, Chrome, and Firefox fetch data from web pages and present it to us in attractive formats. Why would we need to download website data with a program? Some programmers build such applications and call them spiders or crawlers, because they crawl through the web and extract HTML content from different websites. Internet search engines such as Google and Bing have crawler programs that download web page content and index it.
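The dead-link check described earlier in this section can be sketched in Python as well. This is an illustration under assumptions, not the VBA condition: the names is_success and check_url are hypothetical, and treating only 2xx status codes as "active" is a choice made here.

```python
import urllib.error
import urllib.request


def is_success(status):
    """Treat any 2xx HTTP status code as an active URL."""
    return 200 <= status < 300


def check_url(url, timeout=10):
    """Return (active, detail); detail is the status code or the error text."""
    try:
        request = urllib.request.Request(url)
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return is_success(response.status), str(response.status)
    except urllib.error.HTTPError as error:
        # The server answered, but with an error status such as 404 or 500.
        return False, str(error.code)
    except (urllib.error.URLError, ValueError) as error:
        # No answer at all (DNS failure, refused connection) or a malformed URL.
        return False, str(error)
```

A crawler would typically run a check like this on each link before trying to download and index the page.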

Once in a while you find yourself presented with a lot of choices: links to 100 MP3s of live performances by your favorite band, or 250 high-res photos of kittens, or a pile of video files. You could go through each link, one by one, right-clicking and choosing Save As..., but you've got better things to do, and luckily there are free mass-downloading browser add-ons that will do the job for you.

If you use Firefox

Firefox users have available to them the excellent and free add-on DownThemAll. Once you've installed DownThemAll, you'll have a few new options in Firefox. Under your Tools menu and your right-click context menu you'll get options that say 'DownThemAll!' and 'dTaOneClick!'

The first option opens the main DTA dialog. This will show you a list of all the files and pages the current page links to.
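To make the idea of "everything the current page links to" concrete, here is a small Python sketch that collects the link targets from a page's HTML. It is not how DownThemAll is implemented; the class and function names are hypothetical, and it only looks at the href attribute of anchor tags.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect the target of every <a href="..."> tag as an absolute URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the address of the page.
                self.links.append(urljoin(self.base_url, value))


def extract_links(html, base_url):
    """Return all link targets found in `html`, in document order."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links
```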

In this dialog you can select which items you want to download and choose where the downloaded files are saved on your hard drive. Below, the filtering options let you choose certain kinds of files (e.g. videos or images), or something more specific like .mp3 for all MP3 files.

If you want to get really specific, you can check the 'Reg.' (regular expression) option to select files with a pattern of your own.

At the top of the dialog you can also choose 'Pictures and Embedded' to instead see a list of all images, videos, or other files that are embedded in the current page.
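The two kinds of filtering described above, by file type and by regular expression, can be illustrated with a short Python sketch. It is only a model of the idea, not DownThemAll's own filter logic, and the function names are hypothetical.

```python
import re
from urllib.parse import urlparse


def filter_by_extension(urls, extensions):
    """Keep URLs whose path ends with one of `extensions`, e.g. [".mp3"]."""
    wanted = tuple(ext.lower() for ext in extensions)
    # Compare against the path only, so query strings like ?size=big are ignored.
    return [url for url in urls if urlparse(url).path.lower().endswith(wanted)]


def filter_by_regex(urls, pattern):
    """Keep URLs that match the regular expression `pattern` (case-insensitive)."""
    compiled = re.compile(pattern, re.IGNORECASE)
    return [url for url in urls if compiled.search(url)]
```

For example, filtering a list of links with [".mp3"] keeps only the MP3 files, while a pattern such as live.*\.mp3$ narrows the selection to MP3 files with "live" somewhere earlier in the address.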

Once you've chosen the files you want to download, you can click on 'Start!' to begin downloading them all or 'Queue!' to just mark them for later downloading. Now you'll get the download screen. This is pretty straightforward: it shows you all of the files that are currently being downloaded or in the queue.

On the download screen you can pause and resume downloads or change their order.

The other option I mentioned, 'dTaOneClick!', is just a version of the above for impatient people. You can right-click on a link, choose 'Start link with dTaOneClick!', and it will start downloading it without bothering you with options.

If you start poking around DownThemAll's preferences and using the context menus, you'll see that it has lots of settings that you can tweak to your heart's content. But I've covered the basics, so start downloading!

If you use Internet Explorer

Quality add-ons aren't quite so plentiful for Internet Explorer as they are for Firefox, especially in the free department, but there are a few gems out there. For mass downloading I recommend PimpFish Basic. As the name implies, there is a more featureful version, but for our purposes the Basic version will suffice. PimpFish adds a toolbar to Internet Explorer and some simple options to your right-click context menu like 'Grab movies on this page' and 'Grab pictures on this page.'

As the Database Manager for a boutique management consultancy, our data needs are constantly growing and evolving, and having tried numerous other solutions, I have not found another data mining tool that so ably combines the versatility and power of ParseHub. It comes with an impressively easy-to-use front end, which has allowed even an inexperienced user such as myself to make use of whatever data I can find, irrespective of its format or volume. I also make good use of ParseHub's ability to schedule and repeat runs over time, and all of this, combined with a constantly supportive Customer Service team, makes ParseHub one of the most useful data tools at my disposal.

—Patrick Moore, Database Manager

We were one of the first customers to sign up for a paid ParseHub plan. We were initially attracted by the fact that it could extract data from websites that other similar services could not (mainly due to its powerful Relative Select command). The team at ParseHub were helpful from the beginning and have always responded promptly to queries. Over the last few years we have witnessed great improvements in both the functionality and the reliability of the service. We use ParseHub to extract relevant data and include it on our travel website. This has drastically cut the time we spend on administrative tasks such as updating data.

Our content is more up-to-date and revenues have increased significantly as a result. I would strongly recommend ParseHub to any developers wishing to extract data for use on their sites.

—David Mottershead, Owner

Click the Library button, click History and select Clear Recent History. In the Time Range to clear: drop-down, select Everything. Below the drop-down menu, select both Cookies and Cache. Click Clear Now.

If clearing Firefox's cookies and cache didn't work, it's probably a sign that there is a problem with the website itself. In that case you'll just have to wait for it to get fixed. With big sites like Twitter or Facebook this may only be a few minutes.

If you don't see any of the error messages above, check to see if any of the specific problems below match what you see:

The website loads but doesn't work properly

If the website doesn't look right or doesn't work the way it's supposed to, you should check out the following articles.
